The five-minute loop, explained

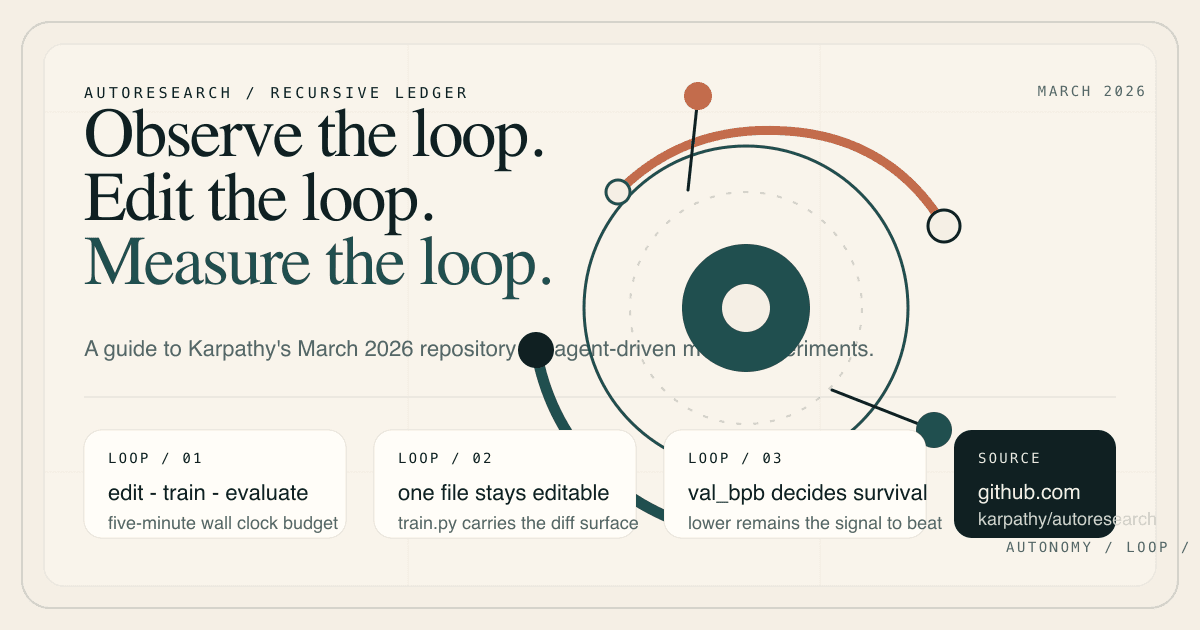

Autoresearch is Karpathy's five-minute loop for autonomous AI research.

An agent edits `train.py`, runs a short experiment, checks `val_bpb`, and keeps only the ideas that win. This page shows what the repo does, why the constraint matters, and how to get to a first run.

Creator: Andrej Karpathy

Launch: March 2026

Core loop: 5-minute experiments

Overview

What makes autoresearch different from a normal training repo

How it works

The loop stays small so each improvement is easy to judge

One editable training file, one fixed wall-clock budget, and one metric keep the system narrow enough to inspect and strong enough to iterate.
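The combination of one file, one budget, and one metric can be pictured as a small accept-or-reject loop. The following is a minimal sketch under stated assumptions, not the repo's actual code: `run_with_budget`, `research_loop`, `propose_edit`, and `run_experiment` are hypothetical names introduced for illustration, and the sketch assumes that a lower `val_bpb` is better.

```python
import time
from typing import Callable

BUDGET_SECONDS = 5 * 60  # the fixed wall-clock budget for one experiment


def run_with_budget(
    step: Callable[[], None],
    budget_s: float,
    clock: Callable[[], float] = time.monotonic,
) -> int:
    """Call step() repeatedly until the wall-clock budget is spent.

    Returns the number of steps completed. The clock is injectable so the
    function can be exercised without actually waiting.
    """
    deadline = clock() + budget_s
    steps = 0
    while clock() < deadline:
        step()
        steps += 1
    return steps


def research_loop(
    propose_edit: Callable[[str], str],
    run_experiment: Callable[[str], float],
    source: str,
    rounds: int,
) -> tuple[str, float]:
    """Greedy keep-if-better search over versions of a single file.

    propose_edit   -- hypothetical stand-in for the agent editing the file
    run_experiment -- hypothetical stand-in for one budgeted training run
                      that returns the validation metric
    """
    best_source = source
    best_score = run_experiment(best_source)  # baseline metric
    for _ in range(rounds):
        candidate = propose_edit(best_source)
        score = run_experiment(candidate)
        if score < best_score:  # assumption: lower val_bpb is better
            best_source, best_score = candidate, score
        # otherwise the candidate is discarded and the best version stands
    return best_source, best_score
```

Because every candidate gets the same time budget and is judged by the same number, a rejected edit costs only one run, and an accepted edit can be attributed to a single change in a single file.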

Quick start

How to go from reading about autoresearch to running it

The README assumes a single NVIDIA GPU, Python 3.10+, and the `uv` package manager. If that matches your setup, this is the shortest path to a first run.
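Before following the README, it can help to confirm those three prerequisites. The script below is a hedged sketch and not part of the repo: `check_prerequisites` is a hypothetical helper, and it assumes that finding `nvidia-smi` on the `PATH` is a reasonable proxy for having an NVIDIA GPU with a working driver.

```python
import shutil
import sys


def check_prerequisites(version_info=sys.version_info, which=shutil.which) -> list[str]:
    """Return a list of human-readable problems; an empty list means ready.

    Both arguments are injectable so the check can be tested on any machine.
    """
    problems = []
    # Python 3.10+ is the version floor stated on this page.
    if tuple(version_info[:2]) < (3, 10):
        problems.append("Python 3.10 or newer is required")
    # uv must be installed and discoverable on PATH.
    if which("uv") is None:
        problems.append("uv was not found on PATH")
    # Assumption: nvidia-smi on PATH indicates an NVIDIA GPU and driver.
    if which("nvidia-smi") is None:
        problems.append("nvidia-smi was not found on PATH")
    return problems


if __name__ == "__main__":
    found = check_prerequisites()
    for problem in found:
        print("MISSING:", problem)
    if not found:
        print("All prerequisites found.")
```

Run it with the same interpreter you plan to use for the project, so the version check reflects the right Python.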

FAQ

The fastest answers to the questions people ask first

Start here if you want to know who created it, how the loop works, what the metric is, or what hardware it needs, without reading the whole repo first.

Primary sources

Every claim on this page is grounded in the repo README or linked public discussion so you can verify the details yourself.